Resources

Rust Official

Rust Lang Home

This Week in Rust

Rustup Components History

- Rustup packages availability on x86_64-unknown-linux-gnu

- rust-lang/rustup-components-history: Rustup package status history

Crates.io

Rust Blog

RustC Dev Guide

- rust-lang/rustc-dev-guide: A guide to how rustc works and how to contribute to it.

- About this guide - Guide to Rustc Development

Rust Vim

Module Arch

- core::arch - Rust

- rust-lang/stdarch: Rust’s standard library vendor-specific APIs and run-time feature detection

Rust Lang - Compiler Team

- Introduction | Rust Lang - Compiler Team

- rust-lang/compiler-team: A home for compiler team planning documents, meeting minutes, and other such things.

Standard Library Developers Guide

- About this guide - Standard library developers Guide

- rust-lang/std-dev-guide: Guide for standard library developers

awesome series

rust-unofficial/awesome-rust

rust-embedded/awesome-embedded-rust

rust-in-blockchain/awesome-blockchain-rust

TaKO8Ki/awesome-alternatives-in-rust

RustBeginners/awesome-rust-mentors

awesome-rust-com/awesome-rust

The Rust Programming Language

by Steve Klabnik and Carol Nichols, with contributions from the Rust Community

This version of the text assumes you’re using Rust 1.62 (released 2022-06-30) or later. See the “Installation” section of Chapter 1 to install or update Rust.

The HTML format is available online at

https://doc.rust-lang.org/stable/book/

and offline with installations of Rust made with rustup; run rustup docs --book to open.

Several community translations are also available.

This text is available in paperback and ebook format from No Starch Press.

🚨 Want a more interactive learning experience? Try out a different version of the Rust Book, featuring: quizzes, highlighting, visualizations, and more: https://rust-book.cs.brown.edu

Rust Language Cheat sheet

Rust Cheat Sheet & Quick Reference

chatGPT Rust Prompts

Rust Grammar Points Experience & Pitfalls

Suppose you are a rust programmer with five years of development experience. During the development process, you deeply feel the difference between rust and python and golang, as well as the characteristics of rust itself. Please list 10-15 features of rust in the development process, and inform them in the following format:

Grammar Points: Ownership Mechanisms

Usage experience: variables can only be used once, if you want to use it multiple times, you have to consider using references. Under the ownership mechanism, factors such as borrowing situation and life cycle have to be considered

Common pitfalls: After a variable is assigned once, it often fails to compile, because the ownership mechanism must be considered

-

Ownership Mechanisms

- Usage experience: Each value has a single owner. Assigning or passing a non-Copy value moves it, so the original variable can no longer be used; to use a value in several places, borrow it with references. Under the ownership mechanism, borrowing rules and lifetimes also have to be considered.

- Common pitfalls: Using a variable after its value has been moved fails to compile, because ownership has already been transferred.
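A minimal sketch of the difference between moving and borrowing. The function names `consume` and `measure` are illustrative and not part of the original notes.

```rust
// Takes ownership of the String; the caller cannot use it afterwards.
fn consume(s: String) -> usize {
    s.len()
}

// Borrows the data; the caller keeps ownership.
fn measure(s: &str) -> usize {
    s.len()
}

fn main() {
    let name = String::from("ferris");

    // Borrowing leaves `name` usable afterwards.
    let borrowed_len = measure(&name);

    // Passing by value moves `name` into `consume`.
    let moved_len = consume(name);

    // println!("{}", name); // would not compile: `name` was moved above

    println!("{} {}", borrowed_len, moved_len);
}
```

Uncommenting the marked line should produce a "borrow of moved value" compile error, which is the pitfall described above.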

-

Memory Safety

- Usage experience: Rust has a strict memory safety mechanism that prevents common programming errors such as null pointer dereferencing, buffer overflows, and use-after-free errors.

- Common pitfalls: The strict memory safety mechanism can sometimes make the development process more cumbersome and may require more thought and planning.

-

Error Handling

- Usage experience: Rust has a robust error handling mechanism that uses the Result and Option enums to provide detailed error messages and handle errors gracefully.

- Common pitfalls: If not handled properly, errors can propagate throughout the program and cause unexpected behavior.
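As a rough illustration of `Result` and `Option`, the sketch below parses two numbers and propagates failures with the `?` operator. The helper names `add_strs` and `first_char` are made up for this example.

```rust
use std::num::ParseIntError;

// Parses both inputs and adds them; `?` returns early with the parse error.
fn add_strs(a: &str, b: &str) -> Result<i32, ParseIntError> {
    let x: i32 = a.trim().parse()?;
    let y: i32 = b.trim().parse()?;
    Ok(x + y)
}

// `Option` models "there may be no value" without a null pointer.
fn first_char(s: &str) -> Option<char> {
    s.chars().next()
}

fn main() {
    // The caller must decide what to do with both outcomes.
    match add_strs("2", "40") {
        Ok(sum) => println!("sum = {}", sum),
        Err(e) => println!("could not parse: {}", e),
    }

    if let Err(e) = add_strs("2", "x") {
        println!("could not parse: {}", e);
    }

    println!("{:?} {:?}", first_char("hi"), first_char(""));
}
```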

-

Performance

- Usage experience: Rust is designed to be a high-performance language, with a focus on low-level control and optimization. It has zero-cost abstractions and supports low-level programming features such as inline assembly.

- Common pitfalls: Writing high-performance code in Rust can sometimes require more complex code and a deeper understanding of how the underlying system works.

-

Traits

- Usage experience: Rust’s trait system allows for powerful abstractions and code reuse. Traits define behavior that types can implement, allowing for generic programming and code that can work with different types.

- Common pitfalls: The trait system can be complex and may require a deeper understanding of Rust’s type system.

-

Cargo Package Manager

- Usage experience: Rust comes with a built-in package manager called Cargo, which makes it easy to manage dependencies, build projects, and publish libraries.

- Common pitfalls: Managing dependencies and dealing with versioning can still be challenging, especially in large projects.

-

Pattern Matching

- Usage experience: Rust’s pattern matching mechanism allows for concise and expressive code, making it easy to handle complex data structures and control flow.

- Common pitfalls: The syntax for pattern matching can be verbose and may require more code than other languages.

-

Macros

- Usage experience: Rust’s macro system allows for powerful metaprogramming, enabling code generation and manipulation at compile time.

- Common pitfalls: Macros can be difficult to write and understand, and may introduce unexpected behavior if not used carefully.

-

Macros 2.0

- Usage experience: Rust’s Macros 2.0 system allows developers to write more powerful and flexible macros, making it easier to generate code that is both concise and expressive.

- Common pitfalls: Macros 2.0 can be challenging to write and debug, and can lead to unexpected behavior if not used properly.

-

Functional Programming

- Usage experience: Rust supports functional programming concepts such as closures, higher-order functions, and immutable data structures, allowing for expressive and concise code.

- Common pitfalls: The syntax and terminology of functional programming can be unfamiliar to some programmers, and functional code may not always be as performant as imperative code.

-

Lifetime Annotations

- Usage experience: Lifetime annotations tell the compiler how long references remain valid, so that no reference outlives the data it points to. They do not allocate or free memory, and in many common cases the compiler infers them (lifetime elision).

- Common pitfalls: Lifetime annotations can be confusing and error-prone, especially for complex data structures and code.

-
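A small sketch of when a lifetime annotation is needed and when it can be left out. The function names `longest` and `first_word` are illustrative choices, not taken from the notes.

```rust
// Two reference parameters: the annotation says the result is valid
// only as long as both inputs are.
fn longest<'a>(a: &'a str, b: &'a str) -> &'a str {
    if a.len() >= b.len() { a } else { b }
}

// One reference parameter: the lifetime is inferred (elision), no annotation needed.
fn first_word(s: &str) -> &str {
    s.split_whitespace().next().unwrap_or("")
}

fn main() {
    let a = String::from("lifetime");
    let b = String::from("scope");
    println!("{}", longest(&a, &b));
    println!("{}", first_word("hello world"));
}
```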

Async/Await

- Usage experience: Rust has native support for asynchronous programming, with the async/await syntax making it easier to write asynchronous code that is both efficient and easy to reason about.

- Common pitfalls: Async/await syntax can be difficult to understand for developers unfamiliar with asynchronous programming, and it can also introduce complex control flow.

-

Unsafe Code

- Usage experience: Rust allows developers to write unsafe code for situations where low-level control and optimization are necessary, but it must be carefully reviewed and handled with caution.

- Common pitfalls: Unsafe code can be challenging to write and debug, and it can introduce security vulnerabilities and undefined behavior.

-

Trait Bounds

- Usage experience: Rust’s trait bounds allow developers to specify constraints on generic types, ensuring that they have the required functionality to be used in a particular context.

- Common pitfalls: Specifying trait bounds can be challenging, especially for complex code, and can lead to confusing error messages.

-

Smart Pointers

- Usage experience: Rust provides several types of smart pointers such as Rc and Arc, which allow for shared ownership and reference counting, making it easier to manage complex data structures.

- Common pitfalls: Using smart pointers can introduce overhead and performance penalties, and can also make the code more complex and error-prone.

-

Type Inference

- Usage experience: Rust has a powerful type inference system that allows the compiler to infer the type of a variable, making the code more concise and readable.

- Common pitfalls: Type inference can sometimes lead to unexpected behavior, especially when combined with complex expressions or nested data structures.

-

Algebraic Data Types

- Usage experience: Rust’s algebraic data types such as enums and structs with named fields provide a flexible and expressive way to define data structures and their behavior.

- Common pitfalls: Algebraic data types can be challenging to work with, especially for developers unfamiliar with functional programming concepts.

-

Trait Objects

- Usage experience: Rust’s trait objects allow for dynamic dispatch and polymorphism, making it easier to write generic code that can work with different types.

- Common pitfalls: Using trait objects can lead to performance penalties, and can also make the code more complex and harder to reason about.

-

Iterators

- Usage experience: Rust’s iterator system provides a flexible and expressive way to work with collections and sequences, making it easier to write concise and efficient code.

- Common pitfalls: Iterators can be challenging to work with, especially for developers unfamiliar with functional programming concepts.

-

Benchmarking

- Usage experience: Rust’s built-in benchmark harness (the #[bench] attribute) is only available on the nightly toolchain; on stable, developers typically measure performance with third-party crates such as Criterion and optimize accordingly.

- Common pitfalls: Benchmarking can be time-consuming and can lead to over-optimization, resulting in code that is hard to understand and maintain.

-

Closures

- Usage experience: Rust’s closures allow developers to define anonymous functions that can capture and manipulate variables from their surrounding environment.

- Common pitfalls: Closures can be challenging to understand, especially for developers unfamiliar with functional programming concepts.

-

Concurrency

- Usage experience: Rust’s concurrency primitives such as threads and channels allow developers to write concurrent code that is both safe and efficient.

- Common pitfalls: Concurrency can introduce complex control flow and race conditions, and can be challenging to debug and optimize.

-

Documentation

- Usage experience: Rust’s documentation system encourages developers to write comprehensive and readable documentation, making it easier for others to understand and use their code.

- Common pitfalls: Documentation can be time-consuming and can lead to neglecting code quality and maintainability.

-

Testing

- Usage experience: Rust’s testing framework makes it easy to write and run tests, ensuring that the code is correct and reliable.

- Common pitfalls: Testing can be time-consuming and can lead to over-testing, resulting in code that is hard to understand and maintain.

-

Memory Management

- Usage experience: Rust’s memory management system ensures that the code is both safe and efficient, with no runtime overhead or garbage collection.

- Common pitfalls: Memory management can be challenging to understand, especially for developers unfamiliar with low-level programming concepts.

-

Functional Programming

- Usage experience: Rust’s functional programming features such as higher-order functions and closures allow developers to write concise and expressive code.

- Common pitfalls: Functional programming can be challenging to understand, especially for developers unfamiliar with functional programming concepts.

-

Foreign Function Interface (FFI)

- Usage experience: Rust’s FFI allows developers to call functions from other programming languages and libraries, making it easier to integrate Rust code with existing systems.

- Common pitfalls: FFI can introduce security vulnerabilities and undefined behavior, and can be challenging to debug and optimize.

-

Unsafe Rust

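- Usage experience: Rust’s unsafe features allow developers to write low-level code that the compiler cannot verify, such as dereferencing raw pointers. The programmer takes over responsibility for upholding the safety rules, which makes it possible to build fast code behind safe abstractions.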

- Common pitfalls: Unsafe Rust can introduce memory unsafety and undefined behavior, and can be challenging to debug and optimize.

The video cannot be longer than 3 hours

Rust Crash Course | Rustlang

- Rust Crash Course | Rustlang

- Introduction to Rust Programming Language

- Installing Rust and Setting Up the Environment

- Introduction to Print Line Command and Formatting

⭐️ Basic Formatting with Multiple Placeholders

⭐️ Placeholder Traits

⭐️ Debug Traits

- Variables

- Introduction to Rust Programming Language

- Rust Primitive Types

⭐️ Strings in Rust

- Working with Strings

- Working with Tuples

- Tuples and Arrays in Rust

⭐️ Slicing Arrays and Using Vectors

⭐️ Mutating Values in Vectors

- Conditionals

- Loops

- Introduction to Loops and Functions

- Rust Functions

- Pointers and References

- Rust Basics: References and Structs

- Creating a Struct in Rust

- Structs

⭐️ Introduction to Structs

- Enums

- Functions with Enums

- Command Line Arguments

- Introduction to Command Line Applications

- Conclusion

Introduction to Rust Programming Language

Section Overview: This video is an introductory course on the fundamentals and syntax of the Rust programming language. The instructor will cover what Rust is, its relevance in web development, and how it compares to other systems languages.

What is Rust?

- Rust is a fast and powerful programming language known for being a systems language.

- It’s best suited for building drivers, compilers, and other tools that programmers use in development.

- Rust is becoming relevant in web development because of WebAssembly, which allows us to build secure, portable, and fast web applications using languages like C++ and Rust.

⭐️Garbage Collection

- One of the biggest advantages of Rust is that it doesn’t have garbage collection.

- In garbage-collected languages such as JavaScript, collection pauses happen at unpredictable times and can noticeably slow a program down.

- With languages like C++, you have to manage all memory allocation yourself, which makes programming much harder.

- In contrast with both these approaches, Rust decides at compile time when memory is freed: each value has an owner, and the value is dropped automatically as soon as its owner goes out of scope. No runtime collector scans the heap.

Cargo Package Manager

- Rust has its own package manager called Cargo which is similar to NPM for Node.js or Composer for PHP.

Installing Rust and Setting Up the Environment

Section Overview: This section covers how to install Rust, set up the environment, and use some of the basic utilities.

Installing Rust

- To install Rust, run the installer and press 1 to proceed with the default installation.

- If rustup --version returns “not found,” restart your terminal.

Basic Utilities

- rustup is a version manager that can be used to check for updates with rustup update.

- rustc is the compiler.

- Cargo is the package manager.

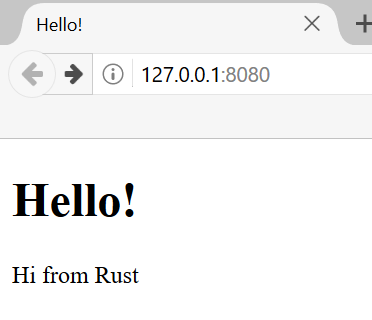

Creating a Rust File and Compiling It

- Install a Rust extension in VS Code for code completion and linting. The video uses the RLS extension, which has since been deprecated in favor of rust-analyzer.

- Create a new file called hello.rs with an entry point function called main().

- Use println!() to print out “Hello World.”

- Compile it using rustc hello.rs and run it using ./hello.

Initializing a Project with Cargo

- Initialize a project in an existing folder using cargo init.

- The project structure includes a Cargo.toml file for application info and dependencies, a .gitignore file, and a source folder for all Rust code.

- Use cargo run to compile and run your project. Use cargo build to just build it. Use cargo build --release for production optimization.

Introduction to Print Line Command and Formatting

Section Overview: In this section, the instructor introduces the print line command and formatting in Rust programming language.

Creating a Function in Print File

- To create a function in the print file, use pub, which means public.

- Create a run function for each file to run it.

- Use println! to print to the console.

⭐️Running the Function in Main RS Files

- Use mod followed by the name of the file above the main function.

- Use print::function_name to call the function.
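The module layout above can be sketched in a single file. This example assumes an inline `mod print { ... }` block in place of the separate print file from the video, and the `greeting` helper is hypothetical.

```rust
// In the video this code lives in its own file, pulled in with `mod print;`.
// An inline module is used here so the sketch fits in one file.
mod print {
    // `pub` makes the function visible outside the module.
    pub fn greeting() -> String {
        String::from("Hello from the print module")
    }

    pub fn run() {
        println!("{}", greeting());
    }
}

fn main() {
    // Call the module's function through its path.
    print::run();
}
```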

Basic Formatting

- Use curly braces as placeholders for variables or numbers that need replacement.

- Use double quotes for strings.

- Save and run code using cargo run.

⭐️Basic Formatting with Multiple Placeholders

Section Overview: In this section, we learn how to format multiple placeholders using Rust programming language.

Using Multiple Placeholders

- Add multiple placeholders by adding more curly braces.

- Replace each placeholder with its corresponding parameter index number.

Positional Arguments

- Use positional parameters when using variables twice or more times in a string.

- Add index numbers inside curly braces according to their position in parameters list.

Named Arguments

- Use named parameters instead of positional ones when you have many arguments.

- Assign names before values separated by an equal sign.
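The three placeholder styles above can be sketched as follows. `format!` is used in place of `println!` so the results can be returned, and the function names are illustrative.

```rust
// Plain placeholders: each `{}` takes the next argument in order.
fn positional(name: &str, place: &str) -> String {
    format!("{} is from {}", name, place)
}

// Indexed placeholders: `{0}` can be reused several times.
fn indexed(name: &str, first: &str, second: &str) -> String {
    format!("{0} likes {1}; {0} also likes {2}", name, first, second)
}

// Named placeholders: names are assigned with `name = value`.
fn named(who: &str, what: &str) -> String {
    format!("{name} likes to {activity}", name = who, activity = what)
}

fn main() {
    println!("{}", positional("Brad", "Mass"));
    println!("{}", indexed("Brad", "code", "music"));
    println!("{}", named("Brad", "play baseball"));
}
```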

⭐️Placeholder Traits

Section Overview: In this section, we learn about placeholder traits available in Rust programming language.

Binary Trait (:b)

- The binary trait is represented by :b

- Used for formatting integers in binary

Hexadecimal Trait (:x)

- The hexadecimal trait is represented by :x (lowercase digits) or :X (uppercase digits)

- Used for formatting integers in hexadecimal

Octal Trait (:o)

- The octal trait is represented by :o

- Used for formatting integers in octal
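A short sketch of the radix specifiers. In current Rust they are written in lowercase (`{:b}`, `{:x}`, `{:o}`), with `{:X}` for uppercase hexadecimal digits. The helper `radix_forms` is a made-up name for this example.

```rust
// Returns the binary, hexadecimal and octal text forms of `n`.
fn radix_forms(n: u32) -> (String, String, String) {
    (format!("{:b}", n), format!("{:x}", n), format!("{:o}", n))
}

fn main() {
    let (bin, hex, oct) = radix_forms(10);
    println!("Binary: {} Hex: {} Octal: {}", bin, hex, oct);

    // `#` adds the radix prefix, `X` switches to uppercase digits.
    println!("{:#b} {:#x} {:X}", 10, 255, 255);
}
```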

⭐️Debug Traits

Section Overview: In this section, we learn how to use the print line function and tuples to print multiple values. We also learn how to do basic math.

Using Print Line Function

- Use the println! macro with a colon and a question mark inside the curly braces ({:?}).

- Print multiple values at once by passing them as a tuple.

- Example:

println!("{:?}", (10, true, "hello"));

Basic Math

- Use the println! macro with an expression.

- Example:

println!("10 + 10 = {}", 10 + 10);

Variables

Section Overview: In this section, we learn about variables in Rust. Variables are immutable by default and Rust is a block-scoped language.

Creating Variables

- Use the let keyword to create variables.

- Example:

let name = "Brad";

- Immutable by default.

Mutable Variables

- Add the keyword mut to make variables mutable.

- Example:

let mut age = 37;

- Can reassign value later.

Constants

- Use the keyword const for constants.

- Must explicitly define type.

- Usually all uppercase.

- Example:

const ID:i32 = 001;

⭐️Assigning Multiple Variables at Once

- Use a comma-separated tuple pattern with let to assign multiple variables at once.

- Example:

#![allow(unused)] fn main() { let (x, y, z) = (1, 2, 3); println!("x = {}, y = {}, z = {}", x, y, z); }

Introduction to Rust Programming Language

Section Overview: In this section, the instructor introduces Rust programming language and covers topics such as assigning variables, data types, and how Rust is a statically typed language.

Assigning Variables

- Multiple variables can be assigned at once in Rust.

- The pub fn run function is used to run the code in each file.

- Integers come in signed and unsigned forms with different bit sizes. Floats have 32 and 64 bits. Booleans are represented by bool. Characters are represented by char.

- ⭐️The growable String type is not a primitive type in Rust (the string slice type str is).

- ⭐️Tuples and arrays are also primitive types.

⭐️Data Types

- Vectors are growable arrays while arrays have fixed lengths.

- Rust is a statically typed language which means that it must know the types of all variables at compile time. However, the compiler can usually infer what type we want to use based on the value and how we use it.

- Explicit typing can be done using a colon followed by the desired type.

- The maximum value of an integer type can be found using std::i32::MAX or std::i64::MAX (in current Rust, the associated constants i32::MAX and i64::MAX are preferred).

Boolean Expressions

- Booleans can be set explicitly or inferred from expressions.

- A boolean expression evaluates to either true or false depending on whether the condition is met.

Rust Primitive Types

Section Overview: In this section, the speaker discusses primitive types in Rust, including char and Unicode.

Char and Unicode

- A char is a single character that can be represented with single quotes.

- Unicode characters can also be used by specifying them with a backslash, a u, and the code point in curly braces, for example '\u{1F600}'.

- Emojis are also Unicode characters that can be used in Rust.

⭐️Strings in Rust

Section Overview: In this section, the speaker discusses strings in Rust, including primitive strings and string types.

Creating Strings

- There are two types of strings in Rust: primitive string slices (str, usually seen as &str; immutable and fixed-length) and the String type (a growable, heap-allocated data structure).

- To create a primitive string, use a double-quoted string literal. Single quotes are reserved for char values.

- To create a String, use the String::from() function.

Modifying Strings

- The push() method adds a single character to the end of a string.

- The push_str() method adds a string slice to the end of a string.

- The len() method returns the length of a string in bytes.

- The capacity() method returns the number of bytes the string can hold without reallocating.

- The is_empty() method checks if a string is empty.
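The methods listed above can be tried out with a small sketch. The function name `build_greeting` is an illustrative choice.

```rust
// Builds "Hello World" step by step with push and push_str.
fn build_greeting() -> String {
    let mut hello = String::from("Hello");
    hello.push(' '); // appends a single char
    hello.push_str("World"); // appends a string slice
    hello
}

fn main() {
    let hello = build_greeting();
    println!("{}", hello);
    println!("length in bytes: {}", hello.len());
    // The capacity is at least the length; the exact value is up to the allocator.
    println!("capacity: {}", hello.capacity());
    println!("is empty: {}", hello.is_empty());
}
```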

Working with Strings

Section Overview: In this section, the instructor demonstrates how to work with strings in Rust.

Checking for Substrings and Replacing Them

- Use the contains method to check if a string contains a substring.

- Use the replace method to replace a substring with another string.

Looping Through Strings

- Use a for loop and the split_whitespace method to loop through a string by whitespace.

Creating Strings with Capacity

- Use the with_capacity method to create a string with a certain capacity.

- Use the push method to add characters to the created string.

Assertion Testing

- Use the assert_eq!() macro to test if something is equal to something else.
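A sketch that ties the operations in this section together. The helpers `swap_word` and `words` are hypothetical names introduced for the example.

```rust
// Replaces `from` with `to` only if the text actually contains it.
fn swap_word(text: &str, from: &str, to: &str) -> String {
    if text.contains(from) {
        text.replace(from, to)
    } else {
        text.to_string()
    }
}

// Splits a string into its whitespace-separated words.
fn words(text: &str) -> Vec<&str> {
    text.split_whitespace().collect()
}

fn main() {
    let hello = String::from("Hello World");
    println!("{}", swap_word(&hello, "World", "There"));

    for word in words(&hello) {
        println!("{}", word);
    }

    // Pre-allocate room for at least 10 bytes, then push two chars.
    let mut s = String::with_capacity(10);
    s.push('a');
    s.push('b');

    // Assertions panic if the two sides differ.
    assert_eq!(2, s.len());
    assert!(s.capacity() >= 10);
    println!("{}", s);
}
```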

Working with Tuples

Section Overview: In this section, the instructor demonstrates how to work with tuples in Rust.

Introduction to Tuples

- A tuple is a group of values in Rust.

Creating and Accessing Tuples

- Create tuples using parentheses and commas between values.

- Access tuple elements using dot notation and index numbers starting from 0.

Destructuring Tuples

- Destructure tuples into individual variables using pattern matching syntax.

Tuple Methods

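- Tuples do not have a .len() method; the number of elements is fixed by the tuple’s type and known at compile time. The .len() method is available on arrays, slices, vectors, and strings.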
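Creating, indexing and destructuring a tuple might look like the sketch below. The function `describe` and the sample values are illustrative.

```rust
// Destructures the tuple into three variables and formats them.
fn describe(person: (&str, &str, i8)) -> String {
    let (name, place, age) = person;
    format!("{} is from {} and is {}", name, place, age)
}

fn main() {
    // Parentheses and commas create the tuple.
    let person = ("Brad", "Mass", 37);

    // Dot notation with a zero-based index reads single elements.
    println!("{} {}", person.0, person.2);

    println!("{}", describe(person));
}
```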

Tuples and Arrays in Rust

Section Overview: In this section, the speaker introduces tuples and arrays in Rust programming language. They explain how to create tuples and access their values using dot syntax. The speaker also demonstrates how to create arrays with fixed lengths and change their values.

Creating Tuples

- To create a tuple, use parentheses and separate the values with commas.

- Use dot syntax to access tuple values by index.

- Tuples are immutable by default but can be made mutable using the mut keyword.

Creating Arrays

- Arrays have a fixed length that must be specified during creation.

- Use square brackets to define an array’s elements.

- Access array elements using zero-based indexing.

- Arrays are stored inline, so a local array lives on the stack, and its elements occupy contiguous memory locations.

⭐️Modifying Array Values

- Use the mut keyword to make an array mutable.

- Change an array value by assigning a new value at its index.

⭐️Debugging Arrays

- Use the println!() macro with the debug trait ({:?}) to print entire arrays.

- Get the length of an array using the .len() method.

- Get the amount of memory occupied by an array, in bytes, using the std::mem::size_of_val(&array) function.

⭐️Slicing Arrays and Using Vectors

Section Overview: In this section, the instructor demonstrates how to slice arrays and use vectors in Rust.

Slicing Arrays

- To get a slice from an array, create a variable with the type defined as &[i32]; it does not need to be mutable.

- Use a range in square brackets to specify the elements you want to include in the slice.

- The resulting slice can be printed using the debug trait.
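The array and slice operations described above can be sketched as follows. The helper `middle` is a hypothetical name, and it assumes its input has at least three elements.

```rust
use std::mem;

// Borrows elements 1 and 2 (the end of the range is exclusive).
// Panics if the input has fewer than three elements.
fn middle(numbers: &[i32]) -> &[i32] {
    &numbers[1..3]
}

fn main() {
    // The length is part of the array type.
    let mut numbers: [i32; 5] = [1, 2, 3, 4, 5];

    // Reassign one element through its index.
    numbers[2] = 20;

    println!("{:?}", numbers);
    println!("length: {}", numbers.len());

    // Five i32 values of 4 bytes each.
    println!("bytes: {}", mem::size_of_val(&numbers));

    let slice: &[i32] = middle(&numbers);
    println!("slice: {:?}", slice);
}
```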

Using Vectors

- Vectors are resizable arrays that can have elements added or removed.

- To define a vector, use Vec<i32> instead of [i32; N] for the type definition.

- Use .push() to add elements to a vector and .pop() to remove the last one.

- A for loop can be used to iterate through all values in a vector. Use iter_mut() to mutate each value individually.

⭐️Mutating Values in Vectors

Section Overview: In this section, the instructor shows how to mutate values in vectors by multiplying each element by 2:

- Use a for loop with iter_mut() on a vector.

- Multiply each element by 2 using the *= operator.

- Print out the entire vector using the debug trait.
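A minimal sketch of the vector operations above. The function name `double_all` is illustrative.

```rust
// Doubles every element in place and hands the vector back.
fn double_all(mut numbers: Vec<i32>) -> Vec<i32> {
    for x in numbers.iter_mut() {
        // `x` is a mutable reference, so dereference it to change the value.
        *x *= 2;
    }
    numbers
}

fn main() {
    let mut numbers: Vec<i32> = vec![1, 2, 3, 4];

    numbers.push(5);
    numbers.push(6);
    numbers.pop(); // removes the last element (6)

    for x in numbers.iter() {
        println!("Number: {}", x);
    }

    let doubled = double_all(numbers);
    println!("{:?}", doubled);
}
```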

Conditionals

Section Overview: This section covers the use of conditionals in Rust.

If-else statements

- Conditionals are used to check the condition of something and then act on the result.

- An if-else statement is used to check a condition and execute code based on whether it’s true or false.

- Use curly braces for blocks of code that should be executed when a condition is met.

- Parentheses around conditions are allowed but not required; idiomatic Rust omits them.

Operators

- Rust has several operators, including + and -.

- A boolean variable can be created with let check_ID: bool = false;

- Types can be added to variables if desired.

⭐️Shorthand If

- Rust does not have a ternary operator like many other languages, but because if is an expression, a one-line if/else can produce a value instead.

- This shorthand if works similarly to the ternary operator in other languages.
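A sketch of using `if` as an expression in place of a ternary operator. The function name `entry_message` and the age threshold are made up for the example.

```rust
// Both branches must produce the same type, here a string slice.
fn entry_message(age: u8) -> &'static str {
    if age >= 18 { "allowed" } else { "not allowed" }
}

fn main() {
    let age = 21;

    // The result of the `if` expression is bound directly to a variable.
    let message = if age >= 18 { "allowed" } else { "not allowed" };
    println!("{}", message);

    println!("{}", entry_message(12));
}
```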

Loops

Section Overview: This section covers loops in Rust.

Infinite Loop

- An infinite loop is a loop that runs indefinitely until it’s interrupted by an external event or action.

- In Rust, an infinite loop can be created using the loop keyword followed by curly braces containing the code to execute repeatedly.

While Loop

- A while loop executes as long as its condition remains true.

- The syntax for a while loop is the while keyword followed by the condition (no parentheses needed) and curly braces containing the code block to execute.

For Loop

- A for loop iterates over a range or collection of values.

- The syntax for a for loop is the for keyword, a variable name, the in keyword, an iterator expression such as a range, and curly braces containing the code block to execute.

Introduction to Loops and Functions

Section Overview: In this section, the instructor introduces loops and functions in Rust programming language.

While Loop

- A while loop is used to execute a block of code repeatedly as long as a condition is true.

- Use an if statement inside the loop to break out of it when a certain condition is met.

- Example: Print numbers 1 through 20 using a while loop with a break statement.

FizzBuzz Challenge

- The FizzBuzz challenge requires looping through numbers from 0 to 100 and printing “Fizz” for multiples of 3, “Buzz” for multiples of 5, and “FizzBuzz” for multiples of both.

- Use the modulus operator (%) to check if a number is divisible by another number.

- Example: Implementing FizzBuzz challenge using while loop with conditional statements.

For Range Loop

- A for range loop can be used to iterate over a range of values specified by the user.

- It’s similar to the for-each loop in other programming languages.

- Example: Implementing FizzBuzz challenge using for range loop.
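The FizzBuzz variants mentioned above could be sketched like this. The helper `fizzbuzz` is an illustrative name, and the loops stop at 15 rather than 100 only to keep the output short.

```rust
// Returns the FizzBuzz text for a single number.
fn fizzbuzz(n: u32) -> String {
    if n % 15 == 0 {
        "FizzBuzz".to_string()
    } else if n % 3 == 0 {
        "Fizz".to_string()
    } else if n % 5 == 0 {
        "Buzz".to_string()
    } else {
        n.to_string()
    }
}

fn main() {
    // While loop: the counter is managed by hand.
    let mut count = 1;
    while count <= 15 {
        println!("{}", fizzbuzz(count));
        count += 1;
    }

    // For range loop: `1..=15` includes both ends.
    for n in 1..=15 {
        println!("{}", fizzbuzz(n));
    }
}
```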

Functions

- Functions are used to store blocks of code that can be reused multiple times throughout your program.

- They take input parameters and return output values (if necessary).

- Example: Creating functions in Rust, including defining function parameters and return types.

Rust Functions

Section Overview: In this section, we learn about Rust functions and how to use them.

Creating a Function

- To create a function in Rust, use the fn keyword followed by the function name.

- Parameters are declared inside parentheses, each with its type.

- Use an arrow syntax to specify the return type of the function.

- To call a function, simply write its name followed by any necessary arguments in parentheses.

⭐️Returning from a Function

- To return a value from a Rust function, omit the semicolon at the end of the expression.

- The last expression in a Rust function is automatically returned.

⭐️Closures

- Closures are similar to functions but are more compact and can use outside variables.

- Use let to define closures and bind them to variables.

- Closures can take parameters and return values just like functions.
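A sketch of a function with an implicit return next to a closure that captures an outside variable. The names `add` and `add_offset` are illustrative.

```rust
// The arrow gives the return type; the last expression (no semicolon) is returned.
fn add(n1: i32, n2: i32) -> i32 {
    n1 + n2
}

// Builds a closure that captures `offset` from the enclosing scope and calls it.
fn add_offset(n: i32, offset: i32) -> i32 {
    let add_n = |x: i32| x + offset;
    add_n(n)
}

fn main() {
    println!("Sum: {}", add(5, 5));

    // A closure bound with `let`, using the outside variable `n3`.
    let n3 = 10;
    let add_nums = |n1: i32, n2: i32| n1 + n2 + n3;
    println!("Closure sum: {}", add_nums(3, 3));

    println!("{}", add_offset(1, 41));
}
```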

Pointers and References

Section Overview: In this section, we learn about pointers and references in Rust.

Primitive Arrays

- Pointers can be used with primitive arrays in Rust.

- Use let to create variables that point to other variables or arrays.

⭐️Non-primitive Values

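- Non-primitive values such as vectors are moved when assigned to another variable, so the original variable can no longer be used. To let a second variable point to the same data, create a reference instead.

- Use an ampersand (&) before the value when creating a reference.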
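A brief sketch contrasting a primitive array, which is copied on assignment, with a vector, which has to be borrowed to stay usable. The helper `total` is a made-up name.

```rust
// Borrows a slice of numbers and adds them up without taking ownership.
fn total(values: &[i32]) -> i32 {
    values.iter().sum()
}

fn main() {
    // Arrays of integers are Copy, so both variables stay usable.
    let arr1 = [1, 2, 3];
    let arr2 = arr1;
    println!("{:?}", (arr1, arr2));

    // A Vec is not Copy; `&vec1` borrows it so `vec1` remains usable.
    let vec1 = vec![1, 2, 3];
    let vec2 = &vec1;
    println!("{:?}", (&vec1, vec2));

    println!("{}", total(&vec1));
}
```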

Rust Basics: References and Structs

Section Overview: In this section, the instructor covers how to create references in Rust using the ampersand symbol and explains how to create structs in Rust.

⭐️Creating References

- To create a reference in Rust, use the ampersand symbol.

- If you want to point to a non-primitive value, you need to create a reference.

- Use the ampersand symbol before the variable name to create a reference.

Creating Structs

- Structs are custom data types used in Rust that are similar to classes.

- To create a struct, use the struct keyword followed by the name of your struct.

- Define properties or members of your struct as named fields with their respective data types.

- Access properties of your struct using dot syntax.

⭐️Tuple Structs

- Tuple structs are another way of creating structs in Rust.

- They do not have named properties like traditional structs but instead rely on their order within the tuple.

- You can access tuple struct values using index numbers instead of property names.

Creating a Struct in Rust

Section Overview: In this section, the speaker creates a struct called “person” and defines functions to construct and manipulate it.

Creating a New Person

- The speaker creates a new struct called “person”.

- A function called “new” is defined to create a new person. It takes in two string parameters: first name and last name.

- The “new” function returns a person with uppercase first and last names.

Getting Full Name of Person

- A method called “full name” is created to get the full name of the person.

- The “full name” method returns a string using format! macro.

- The full name method is used to print the full name of the person.

Changing Last Name of Person

- A function called “set last name” is created to change the last name of the person.

- The set last name function mutates self and takes in one parameter: last (string).

- The set last name function is used to change the last name of Mary Doe to Williams.

Converting Name to Tuple

- A method called “to tuple” is created to convert the person’s first and last names into a tuple.

- The method returns a tuple with two strings: first_name and last_name.
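The struct and its methods could be sketched roughly as below. This is a reconstruction under assumptions rather than the instructor's exact code: the type is written `Person`, the methods follow the names in the notes, and the names are stored as given instead of being converted to uppercase.

```rust
struct Person {
    first_name: String,
    last_name: String,
}

impl Person {
    // Associated function that constructs a Person from two string slices.
    fn new(first: &str, last: &str) -> Person {
        Person {
            first_name: first.to_string(),
            last_name: last.to_string(),
        }
    }

    // Borrows self and builds the full name with format!.
    fn full_name(&self) -> String {
        format!("{} {}", self.first_name, self.last_name)
    }

    // Needs `&mut self` because it changes a field.
    fn set_last_name(&mut self, last: &str) {
        self.last_name = last.to_string();
    }

    // Consumes the Person and returns its names as a tuple.
    fn to_tuple(self) -> (String, String) {
        (self.first_name, self.last_name)
    }
}

fn main() {
    let mut p = Person::new("Mary", "Doe");
    println!("Person {}", p.full_name());

    p.set_last_name("Williams");
    println!("Person {}", p.full_name());

    println!("Person tuple {:?}", p.to_tuple());
}
```

Because `to_tuple` takes `self` by value, `p` cannot be used after that call.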

Structs

Section Overview: In this section, the instructor explains how structs are used in Rust and compares them to classes in other programming languages.

⭐️Introduction to Structs

- A struct is a data type that groups together variables of different types.

- The syntax for creating a struct is struct Name { field1: Type1, field2: Type2 }.

- Structs are similar to classes in other programming languages like Python, PHP, JavaScript, and Java.

Enums

Section Overview: In this section, the instructor introduces enums and demonstrates how they can be used in Rust.

Introduction to Enums

- An enum is a type that has a few definite values.

- The syntax for creating an enum is enum Name { Variant1, Variant2 }.

- Variants are the possible values of an enum.

Using Enums

- We can create functions that take enums as parameters.

- We can use match statements to perform actions based on the value of an enum.

Functions with Enums

Section Overview: In this section, the instructor demonstrates how to create functions that take enums as parameters and use match statements to perform actions based on their values.

⭐️Creating Functions with Enums

- We can create functions that take enums as parameters by specifying the type of the parameter as the name of the enum.

- We can use match statements to perform actions based on the value of an enum.

- Match statements are similar to switch statements in other programming languages.
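As a minimal sketch of a function that takes an enum and matches on it (the `Movement` enum and the `describe` function are hypothetical names chosen for illustration, not taken from the video):

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
enum Movement {
    Up,
    Down,
    Left,
    Right,
}

// The parameter type is simply the name of the enum.
fn describe(m: Movement) -> &'static str {
    // match must cover every variant, otherwise the code does not compile.
    match m {
        Movement::Up => "moving up",
        Movement::Down => "moving down",
        Movement::Left => "moving left",
        Movement::Right => "moving right",
    }
}

fn main() {
    let moves = [Movement::Up, Movement::Down, Movement::Left, Movement::Right];
    for m in moves.iter() {
        println!("{:?}: {}", m, describe(*m));
    }
}
```

Unlike a switch statement in many languages, `match` is checked for exhaustiveness, so adding a new variant forces every `match` on the enum to be updated.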

Command Line Arguments

Section Overview: In this section, the instructor explains how command line arguments work in Rust and demonstrates how to get them using env::args().

Introduction to Command Line Arguments

- Command line arguments are values passed into a program when it is run from the command line.

- We can use command line arguments to customize the behavior of our program.

⭐️Getting Command Line Arguments

- We can get command line arguments using the env::args() function from the standard library.

- The `env::args()` function returns an iterator over the command line arguments as strings; calling `.collect()` on it produces a vector of strings.

Introduction to Command Line Applications

Section Overview: In this section, the instructor introduces how to create command line applications in Rust.

⭐️Getting Input from Command Line Arguments

- The `args` variable is used to get input from the command line.

- The first element of the `args` vector is conventionally the path of the executable.

- Additional arguments passed in are added to the `args` vector.

- A specific argument can be accessed by its index in the `args` vector.

Creating a Command Line Application

- Create a variable called `command` and set it equal to `args[1]`.

- Use an if statement to check what command was entered and execute different code based on that command.

- Use placeholders to insert variables into print statements.

- If an invalid command is entered, print a message indicating so.
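The steps above can be sketched roughly as follows. The command names, the `name` value, and the `respond` helper are assumptions made for illustration; the sketch also uses `args.get(1)` instead of `args[1]` so that running the program without arguments does not panic.

```rust
use std::env;

// Builds the response for a given argument list (hypothetical helper).
fn respond(args: &[String]) -> String {
    // args[0] is conventionally the program path, so the command sits at index 1.
    let command = match args.get(1) {
        Some(c) => c.as_str(),
        None => return String::from("No command given"),
    };
    let name = "Brad"; // assumed value, used to demonstrate placeholders

    if command == "hello" {
        format!("Hi {}, how are you?", name)
    } else if command == "status" {
        String::from("Status is 100%")
    } else {
        format!("'{}' is not a valid command", command)
    }
}

fn main() {
    // env::args() is an iterator; collect() turns it into a Vec<String>.
    let args: Vec<String> = env::args().collect();
    println!("{}", respond(&args));
}
```

Running the binary with `hello` as its first argument prints the greeting, while any unknown command falls through to the final `else` branch.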

Conclusion

- Rust is a powerful language for creating command line applications.

- There is much more that can be done with Rust beyond what was covered in this video.

- The instructor plans on doing something with WebAssembly soon.

Stop Duplicating Code: Generics, Traits, Lifetimes


Introduction to Generics

Section Overview: In this section, we will learn about generics and how they can be used with functions, methods, and custom types. We will also explore traits and lifetimes.

Removing Duplication

- Placeholder: Generics are like a placeholder for another type.

- Functions can help remove duplication in code.

- The syntax for generics is a type parameter in angle brackets, conventionally `<T>`.

- Rust’s standard library has a `std::cmp` module that includes the `PartialOrd` and `Ord` traits, which are necessary for comparing values of custom data types.
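A minimal sketch of how a generic function removes duplication, assuming a `largest` helper (a hypothetical name) that needs `PartialOrd` for the `>` comparison and `Copy` so values can be returned by value:

```rust
// One function instead of separate versions for i32, char, f64, ...
// Panics on an empty slice; a real API might return Option<T> instead.
fn largest<T: PartialOrd + Copy>(items: &[T]) -> T {
    let mut largest = items[0];
    for &item in items {
        if item > largest {
            largest = item;
        }
    }
    largest
}

fn main() {
    println!("{}", largest(&[34, 50, 25, 100, 65]));
    println!("{}", largest(&['y', 'm', 'a', 'q']));
}
```

Without the `PartialOrd` bound the compiler rejects `item > largest`, because not every type `T` can be compared.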

⭐️Using Generics with Structs

- We can use generics with structs to create more flexible code.

- Defining vs. Creating: A struct definition declares the generic type parameter; the concrete type is fixed when an instance of the struct is created, usually by inference from the field values.

Implementing Traits

- Shared Behavior: Traits define shared behavior between different types.

- Impl: We can implement traits on our own custom types using the impl keyword.

- Trait Bounds: Trait bounds allow us to restrict which types can be used with our generic functions or structs.

Lifetimes

- Lifetimes ensure that references remain valid for as long as they are needed.

- We use an apostrophe (') to denote lifetimes in Rust code, as in `'a`.

- Lifetime elision rules allow us to omit explicit lifetime annotations in certain cases.

Conclusion

Section Overview: In this section, we reviewed what we learned about generics, including how they can be used with functions, methods, and custom types. We also explored traits and lifetimes.

- Generics allow us to write more flexible and reusable code.

- Traits define shared behavior between different types.

- Lifetimes ensure that references remain valid for as long as they are needed.

Generics in Type Definitions and Functions

Section Overview: In this section, the speaker introduces generics and explains how they can be used in type definitions and functions.

Using Generics in Type Definitions

- Generics can be used not only in functions but also in type definitions.

- The `Point` struct is an example of using generics in a type definition. The `T` parameter is used to define the type of `x` and `y`.

- The `Point` struct can take on different characteristics depending on the types defined for `x` and `y`.

- Multiple types can be defined for a struct by separating them with a comma.
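A minimal sketch of both forms. `Point<T>` follows the notes; `MixedPoint<T, U>` is a hypothetical second struct used here to show two type parameters side by side.

```rust
// Both fields share the single type parameter T.
#[derive(Debug, PartialEq)]
struct Point<T> {
    x: T,
    y: T,
}

// Two parameters separated by a comma let x and y differ in type.
#[derive(Debug, PartialEq)]
struct MixedPoint<T, U> {
    x: T,
    y: U,
}

fn main() {
    let ints = Point { x: 5, y: 10 };
    let floats = Point { x: 1.0, y: 4.0 };
    let mixed = MixedPoint { x: 5, y: 4.0 };
    println!("{:?} {:?} {:?}", ints, floats, mixed);
}
```

With the single-parameter `Point<T>`, writing `Point { x: 5, y: 4.0 }` does not compile, because `x` and `y` must have the same type.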

⭐️Using Generics in Enums

- Enums can also use generics. The speaker provides examples of two enums from the standard library that use generics: `Option<T>` and `Result<T, E>`.

- The concrete type for each generic parameter is fixed when an instance of these enums is created; for example, `Some(5)` is an `Option<i32>`.

⭐️Using Generics in Methods

- Generics can also be used inside methods. The speaker uses the `Point` struct as an example.

- When defining a method that uses generics, the generic parameter must be declared again in the implementation, as in `impl<T> Point<T>`.

- Specific methods can be created for specific types by targeting them with their own implementations.

Mixing Types with Generics

- Mixing types with generics is possible using methods like `mix_up()`.

- The method takes two points as arguments, each with its own set of generic parameters.

- The returned point takes `x` from the first point and `y` from the second, so one generic parameter of each input is ignored.
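A sketch of the three ideas in this part: generic methods, a method implemented only for one concrete type, and `mix_up`. The method bodies are assumptions modelled on the notes, and `distance_from_origin` is a hypothetical example of a type-specific method.

```rust
struct Point<T, U> {
    x: T,
    y: U,
}

// The generic parameters are declared again after `impl`.
impl<T, U> Point<T, U> {
    fn x(&self) -> &T {
        &self.x
    }

    // Takes x from self and y from other; U and V are dropped.
    fn mix_up<V, W>(self, other: Point<V, W>) -> Point<T, W> {
        Point {
            x: self.x,
            y: other.y,
        }
    }
}

// This method exists only for points made of two f32 values.
impl Point<f32, f32> {
    fn distance_from_origin(&self) -> f32 {
        (self.x.powi(2) + self.y.powi(2)).sqrt()
    }
}

fn main() {
    let p1 = Point { x: 5, y: 10.4 };
    let p2 = Point { x: "Hello", y: 'c' };
    println!("p1.x = {}", p1.x());
    let p3 = p1.mix_up(p2);
    println!("p3.x = {}, p3.y = {}", p3.x, p3.y);
    let p = Point { x: 3.0_f32, y: 4.0_f32 };
    println!("{}", p.distance_from_origin());
}
```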

Introduction to Generics and Traits

Section Overview:

- In this section, the speaker introduces generics and traits in Rust programming language.

- They explain how generics work, their performance implications, and how they can be used with different data types.

- The speaker also discusses traits, which are similar to interfaces in other programming languages.

⭐️Generics

- Generics allow for code reuse by creating functions that can work with multiple data types.

- Generics have no runtime cost, but they require the compiler to do more work and may result in a larger binary.

- Monomorphization is the process of turning generic code into specific code by filling in the concrete types used at compile time.

- The compiler outputs specific code for each data type used with a generic function.

⭐️Traits

- Traits are similar to interfaces in other programming languages.

- A trait defines a set of methods that can be implemented by custom data types.

- Custom data types implement traits using an implementation block.

- Different custom data types can have different implementations for the same trait method.

- Traits can be defined as public so they can be used in other modules.

Using Traits as Parameters

- Traits can be used as parameters in functions to allow for greater flexibility and reusability.
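A minimal sketch of a public trait, two implementations, and a function that accepts any implementor. The `Summary` trait and the `NewsArticle`, `Tweet`, and `notify` names are assumptions for illustration.

```rust
// A public trait can be implemented and used from other modules.
pub trait Summary {
    fn summarize(&self) -> String;
}

pub struct NewsArticle {
    pub headline: String,
    pub author: String,
}

pub struct Tweet {
    pub username: String,
    pub content: String,
}

// Each type provides its own body for the same trait method.
impl Summary for NewsArticle {
    fn summarize(&self) -> String {
        format!("{}, by {}", self.headline, self.author)
    }
}

impl Summary for Tweet {
    fn summarize(&self) -> String {
        format!("{}: {}", self.username, self.content)
    }
}

// `&impl Summary` accepts a reference to any type implementing the trait.
pub fn notify(item: &impl Summary) -> String {
    format!("Breaking news! {}", item.summarize())
}

fn main() {
    let tweet = Tweet {
        username: String::from("rustlang"),
        content: String::from("new release"),
    };
    println!("{}", notify(&tweet));
}
```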

Rust Generics and Traits

Section Overview: This section covers the use of generics and traits in Rust programming.

⭐️Using Generics with Traits

- Generics can be constrained with traits through trait bounds.

- Multiple traits can be required of a single generic type.

- A generic function parameter can be bound by multiple traits using the `+` syntax.

- The `where` keyword can be used to make the code more readable when specifying multiple trait bounds for generics.
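A short sketch of multiple bounds written with a `where` clause; the `describe` function and its particular bounds are hypothetical.

```rust
use std::fmt::{Debug, Display};

// Equivalent to `fn describe<T: Display + Clone, U: Clone + Debug>(...)`,
// but the bounds are moved out of the angle brackets for readability.
fn describe<T, U>(t: &T, u: &U) -> String
where
    T: Display + Clone,
    U: Clone + Debug,
{
    format!("{} / {:?}", t, u)
}

fn main() {
    println!("{}", describe(&1.5, &vec![1, 2]));
}
```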

⭐️Returning Different Concrete Types Based on Business Logic

- Functions may return different concrete types based on business logic.

- Rust does not allow a function whose return type is `impl Trait` to return different concrete types; this issue is covered in Chapter 17 of The Rust Programming Language (Using Trait Objects That Allow for Values of Different Types).

- A workaround for this issue is using dynamic dispatch with the `dyn` keyword.
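A minimal sketch of the `dyn` workaround, using hypothetical `Shape`, `Square`, and `Rect` types: the function returns a boxed trait object, so each branch may produce a different concrete type.

```rust
trait Shape {
    fn area(&self) -> f64;
}

struct Square(f64);
struct Rect(f64, f64);

impl Shape for Square {
    fn area(&self) -> f64 {
        self.0 * self.0
    }
}

impl Shape for Rect {
    fn area(&self) -> f64 {
        self.0 * self.1
    }
}

// With `-> impl Shape` both branches would need the same concrete type.
// Box<dyn Shape> resolves the method call at run time instead.
fn make_shape(square: bool) -> Box<dyn Shape> {
    if square {
        Box::new(Square(2.0))
    } else {
        Box::new(Rect(2.0, 3.0))
    }
}

fn main() {
    println!("{}", make_shape(true).area());
    println!("{}", make_shape(false).area());
}
```

The trade-off is a heap allocation and an indirect call through a vtable, in exchange for the flexibility of choosing the type at run time.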

⭐️Fixing Issues with PartialOrd and Copy Traits

- When returning values instead of references, items must implement `Copy` or be cloned.

- Dynamic dispatch can be used as a workaround for issues with the `PartialOrd` and `Copy` traits.

Conclusion

Overall, this section covers how to use generics and traits in Rust programming:

- including implementing multiple traits with a single generic type,

- returning different concrete types based on business logic,

- and fixing issues related to the `PartialOrd` and `Copy` traits.

Understanding Lifetimes in Rust

Section Overview: In this section, the speaker introduces lifetimes in Rust and explains how they are used to validate the scope of variables being passed into a function.

Introduction to Lifetimes

- Lifetimes are used to validate the scope of variables being passed into a function.

- The speaker reviews scopes and provides an example where a reference is made to a variable that has already been dropped, resulting in a dangling pointer.

- Lifetimes help get rid of dangling pointers.

⭐️Implementing Lifetimes

- The speaker provides an example of implementing lifetimes using references and explicit lifetime syntax.

- The Rust compiler helps identify when lifetime parameters need to be introduced for borrowed values.

- An example is provided where lifetime parameters are added to fix errors identified by the Rust compiler.

⭐️Output Lifetimes

- The speaker discusses output lifetimes and how they connect input lifetimes with output lifetimes.

- An example is provided where two strings are passed into a function, and the longest string is returned as output.

- The Rust compiler identifies when output lifetimes do not match input lifetimes, which would result in borrowed values being dropped too early.
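The example with two strings is commonly written as the `longest` function below. This is a sketch under the assumption that the video follows the usual form; the `main` function is illustrative.

```rust
// 'a ties the output to both inputs: the returned reference is only
// valid while both x and y are still alive.
fn longest<'a>(x: &'a str, y: &'a str) -> &'a str {
    if x.len() > y.len() {
        x
    } else {
        y
    }
}

fn main() {
    let a = String::from("abcd");
    {
        let b = String::from("xyz");
        let result = longest(a.as_str(), b.as_str());
        // result must be used before b goes out of scope at the closing brace.
        println!("The longest string is {}", result);
    }
}
```

Without the `'a` annotations the signature does not compile, because the compiler cannot tell which input the returned reference borrows from.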

Conclusion

Overall, this section provides an introduction to lifetimes in Rust and demonstrates their importance in validating variable scopes. Examples are provided throughout to illustrate how lifetime syntax can be implemented and how the Rust compiler can help identify errors related to borrowed values.

Dangling Pointers and Lifetimes

Section Overview: This section covers the concept of dangling pointers and how lifetimes are used to prevent them in Rust.

⭐️Dangling Pointers

- Returning a reference to a `String` created inside a function would produce a dangling pointer, because the `String` is dropped and cleaned up when the function ends.

- A borrowed `&str` cannot outlive the value it borrows from, which is what causes the issue with dangling pointers.

Lifetimes in Structs

- Lifetimes not only go into function parameters but also into structs.

- An example is given where an excerpt needs to live for the same amount of time as the reference it holds.

- Both the novel and excerpt need to have the same lifetime so they can be valid for the same amount of time.
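A minimal sketch of a struct that holds a reference. The struct name `ImportantExcerpt`, the `part` field, and the `first_sentence` helper are assumptions for illustration.

```rust
// The struct cannot outlive the string slice stored in `part`.
struct ImportantExcerpt<'a> {
    part: &'a str,
}

// The excerpt borrows from `novel`, so it is valid only while `novel` is.
fn first_sentence(novel: &str) -> ImportantExcerpt<'_> {
    // split always yields at least one item, so the fallback is rarely hit.
    let part = novel.split('.').next().unwrap_or(novel);
    ImportantExcerpt { part }
}

fn main() {
    let novel = String::from("Call me Ishmael. Some years ago...");
    let excerpt = first_sentence(&novel);
    println!("{}", excerpt.part);
}
```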

⭐️Rules for Lifetime Elision

- The compiler assigns its own lifetime parameter to each parameter that is a reference.

- If there’s exactly one input lifetime parameter, that lifetime is assigned to all output lifetime parameters.

- If there are multiple input lifetime parameters but one of them is a reference to self or mutable self, then the lifetime of self is assigned to all output lifetime parameters.
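The three rules can be seen in a small sketch. All names here (`first_word`, `Announcer`, `prefix_for`) are hypothetical.

```rust
// Rules 1 and 2: one reference parameter, so the output gets its lifetime.
fn first_word(s: &str) -> &str {
    s.split_whitespace().next().unwrap_or("")
}

// The same signature with the elided lifetime written out.
fn first_word_explicit<'a>(s: &'a str) -> &'a str {
    first_word(s)
}

struct Announcer {
    prefix: String,
}

impl Announcer {
    // Rule 3: there are two input lifetimes, but one belongs to &self,
    // so the output borrows from self rather than from _msg.
    fn prefix_for(&self, _msg: &str) -> &str {
        &self.prefix
    }
}

fn main() {
    println!("{}", first_word("hello world"));
    println!("{}", first_word_explicit("elided lifetimes"));
    let a = Announcer { prefix: String::from(">>") };
    println!("{}", a.prefix_for("ignored"));
}
```

A free function with two reference parameters and a reference return type matches none of the rules, which is why it needs explicit annotations.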

⭐️Understanding Lifetimes in Rust

Section Overview: In this video, the presenter explains how lifetimes work in Rust. He covers the three elision rules and how they apply to functions and methods with multiple parameters. He also discusses how to use traits and generics with lifetimes.

Lifetime Rules for Functions

- Each parameter gets its own lifetime.

- Rule two does not apply because there are multiple parameters.

- Rule three doesn’t apply because there’s no method.

Specifying Output Lifetime

- When a function has two different parameters, it is unclear what the lifetime of the output should be.

- The programmer must manually annotate the appropriate lifetime in the function signature.

Lifetime Rules for Methods

- Each parameter gets its own lifetime.

- Since there is more than one parameter, rule two does not apply.

- If a method takes a reference to `self`, then the return type is given the same lifetime as `self`.

Static Lifetime

- `'static` is a special lifetime that applies to variables or data that need to be around for the entire run of an application.

- If the compiler suggests using `'static` but it doesn’t seem appropriate, check if any functions used within that code need their lifetimes adjusted.

Advanced Scenarios

The presenter briefly mentions advanced scenarios where traits and lifetimes can be used together. For more information on these topics, refer to Chapter 17 of “The Rust Programming Language” book or check out the Rust reference documentation.

Overall, this video provides a clear explanation of how lifetimes work in Rust and offers helpful tips for working with them effectively.

Generated by Video Highlight

https://videohighlight.com/video/summary/JLfEiJhpTbE

From cargo to crates.io and back again(3/7h)

- From cargo to crates.io and back again(3/7h)

- Introduction

- Cargo and Registries

- Implementing the Loop

- Cargo Package

- Publishing on Crates.io

- Cargo Package and Crate Files

- Understanding the Git Pull from the Git Index

- Transitioning to HTTP-based Sparse Registries

- Understanding the Cargo Registry

- Crates Index

- Digging into Code on Cargo Side and Crates.io Side

- Introduction

- Publishing Crate Files

- Hosting Index on GitHub

- Overview of Git Index and Cargo

- Code Structure of Cargo

- Understanding the Cargo Publish Process

- Introduction

- Definitions

- Custom Registries

- Implementing a Cargo Registry

- Naming the Cargo Index Interface

- Adding Features to Cargo Home

- Defining Publish Phases

- Rust Crate Serialization

- Summary New Function

- Add Crate Job

- Dependency Ordering and Cargo Manifests

- Simplifying the TOML Manifest

- Verifying Tarball

- Cargo.toml Manifest Definition

- Mapping Dependencies

- Cleaning up the Code

- Understanding the Cargo Manifest

- Parsing TOML Manifests

- Understanding the Version Encoding

- Parsing Metadata

- Tidying up Definitions

- Introduction

- Cargo.toml

- Type Definitions

- Future Difficulty

- Optimization

- Cargo Intern String Usage

- Normalized Manifest Conversion

- Package Ownership Conversion

- Generated by Video Highlight

Introduction

In this section, the speaker introduces the topic of the stream and explains that they will be tackling an implementation problem related to how cargo talks to registries.

- The speaker mentions that they will not be porting anything in this stream and will instead write a Rust program from scratch.

- They explain that they will focus on how cargo talks to registries, including crates.io and alternative registries.

- The goal is to implement a crate that can be used by both cargo and crates.io, as well as other registries.

Cargo and Registries

In this section, the speaker discusses what happens when you run `cargo publish` and how it interacts with registries like crates.io.

- When you run `cargo publish`, two things happen: `cargo package` is run first, followed by uploading the package to a specific endpoint at crates.io.

- `cargo package` takes your entire source directory (excluding files in `.gitignore`) and creates a tarball containing all necessary files for distribution.

- The speaker notes that there are different data structures involved in these steps, but they are currently defined in different repositories without sharing logic or definitions.

- This lack of integration means there is potential for mismatches between them. For example, crates.io does not check if metadata sent by cargo matches the file uploaded during publishing.

- Ideally, one crate could be created that could be used by both cargo and crates.io.

Implementing the Loop

In this section, the speaker explains their plan to implement a crate that can be used by both cargo and crates.io.

- The goal is to create a single crate that contains all necessary data structures for interacting with registries like crates.io.

- This would allow for better integration between different parts of the system and prevent potential mismatches.

- The speaker notes that they will not be implementing low-level logic for issuing HTTP requests, but rather focusing on data structures and their conversion between steps.

- They explain that this would allow crates.io to rerun cargo logic if necessary, such as when backfilling information for older packages.

Cargo Package

In this section, the speaker discusses what happens when you run cargo package.

- `cargo package` takes your entire source directory (excluding files in `.gitignore`) and creates a tarball containing all necessary files for distribution.

- The tarball includes everything matched by the `include` directive in your `Cargo.toml`, except things in `exclude`. By default, it also includes everything next to `Cargo.toml` except things in `.gitignore`.

- The speaker notes that the default rules can be weird.

Publishing on Crates.io

In this section, the speaker discusses publishing on crates.io.

- When you run `cargo publish`, two things happen: `cargo package` is run first, followed by uploading the package to a specific endpoint at crates.io.

- The cargo metadata sent during publishing includes information like the name and version of the crate. This is also contained in the file uploaded by cargo.

- Currently, crates.io does not check if these two match. This could lead to issues with basic sanity checking or backfilling information for older packages.

Cargo Package and Crate Files

In this section, the speaker explains what happens when you run cargo package and how it generates a .crate file.

What Happens When You Run cargo package

- Running `cargo package` downloads dependencies from crates.io and generates a `.crate` file.

- The `.crate` file is essentially a `.tar.gz` archive that contains all the files needed for the crate to be built.

- The `.crate` file also contains metadata about the context in which the publish happened, such as the commit hash of the code at that time.

- The `Cargo.toml` inside the `.crate` file is automatically generated by Cargo and is essentially a normalized version of the original manifest, removing features not used by the crate to ensure compatibility with older versions of Cargo. The original manifest is kept alongside it as `Cargo.toml.orig`.

Viewing Contents of a .crate File

- To view the contents of a `.crate` file, navigate to its location (usually `target/package/name-version.crate`) and run `tar tzf name-version.crate`.

- Examining the contents can help identify unnecessary files that can be excluded to make the crate smaller and faster to publish.

Overview of the Crate API

This section provides an overview of the body of data sent by Cargo, which is essentially a manually encoded multi-part HTTP message. It consists of a 32-bit little-endian integer giving the length of the JSON data, the metadata of the package as a JSON object, a 32-bit little-endian integer giving the length of the crate file, and then the crate file.

Body Data Sent by Cargo

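- The body of data sent by Cargo consists of, in order:

- A 32-bit unsigned little-endian integer giving the length of the JSON data

- The JSON data metadata for the package as a JSON object

- A 32-bit unsigned little-endian integer giving the length of the crate file

- The crate file

- The body is essentially a manually encoded multi-part HTTP message.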
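The framing described above can be sketched with the standard library alone. The function names are hypothetical, and the sketch assumes the lengths are 32-bit unsigned little-endian integers as documented for the Cargo registry web API; it is not the code Cargo or crates.io actually uses.

```rust
// Encodes: [json length][json bytes][crate length][crate bytes].
fn encode_publish_body(json: &[u8], krate: &[u8]) -> Vec<u8> {
    let mut body = Vec::with_capacity(8 + json.len() + krate.len());
    body.extend_from_slice(&(json.len() as u32).to_le_bytes());
    body.extend_from_slice(json);
    body.extend_from_slice(&(krate.len() as u32).to_le_bytes());
    body.extend_from_slice(krate);
    body
}

// Reads one length-prefixed chunk and returns (chunk, remaining bytes).
fn read_chunk(input: &[u8]) -> Option<(&[u8], &[u8])> {
    let b = input.get(..4)?;
    let len = u32::from_le_bytes([b[0], b[1], b[2], b[3]]) as usize;
    let rest = &input[4..];
    if rest.len() < len {
        return None;
    }
    Some(rest.split_at(len))
}

// Splits a body back into (json, crate file); None if it is malformed.
fn decode_publish_body(body: &[u8]) -> Option<(&[u8], &[u8])> {
    let (json, rest) = read_chunk(body)?;
    let (krate, rest) = read_chunk(rest)?;
    if rest.is_empty() {
        Some((json, krate))
    } else {
        None
    }
}

fn main() {
    let body = encode_publish_body(b"{\"name\":\"demo\"}", b"fake tarball bytes");
    let (json, krate) = decode_publish_body(&body).expect("well-formed body");
    println!("{} bytes of JSON, {} bytes of crate file", json.len(), krate.len());
}
```

A server-side decoder like `decode_publish_body` is the point where the JSON metadata and the uploaded file first become separate values that could be cross-checked.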

Metadata in Crate API

- The metadata in Crate API includes:

- Name

- Version

- Array of direct dependencies

- Various information about those dependencies such as features and authors.

- This information can be obtained from the Cargo.toml in the crate file.

Validating Crates

- The server should validate crates because it cannot trust that the JSON matches what’s in the crate file.

- Parsing Cargo.toml is necessary to validate crates.

Existing Crate Type Definitions

This section discusses existing crate type definitions and their usage.

Crates.io Crate Type Definitions

- There is a crate called `crates-io` that provides definitions for each API type.

- It is intended for those who want to look like crates.io or want to talk to crates.io.

- It includes other dependencies such as curl and url because it also implements the client side of talking to the registry, which crates.io doesn’t need.

Standalone Crate Type Definitions

- A new standalone crate will be built with the same type definitions as the `crates-io` crate.

- The new crate will not include unnecessary dependencies like curl and url.

- The new crate may take a dependency on the `crates-io` crate.

Cargo Publish

This section discusses what happens when cargo publish is run.

Uploading JSON to Server

- Running `cargo publish` issues an HTTP PUT request through curl, which uploads the JSON to the server.

Server Response

- The response from the server depends on the implementation of the registry.

- On crates.io, it goes into a database and git index.

Understanding the Git Pull from the Git Index

This section explains what happens during a git pull from the Git index and how it updates files.

The Git Index

- When you run `cargo fetch` or update the index, it does a git pull from the Git index.

- Commits updating crates are endless; one is triggered each time someone runs `cargo publish`.

- Each crate has one file in the index with one line per version, and each file path has a specific syntax based on crate name length.
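The path rule can be sketched as below, assuming the layout documented for Cargo registry indexes: names of one or two characters live under `1/` and `2/`, three-character names under `3/` plus the first character, and longer names under directories made of the first two and next two characters, with lowercase file names. The `index_path` function is a hypothetical helper, not part of Cargo.

```rust
// Returns the relative path of a crate's index file, or None for names
// this sketch does not handle (empty or non-ASCII).
fn index_path(name: &str) -> Option<String> {
    if name.is_empty() || !name.is_ascii() {
        return None;
    }
    // Index file names are lowercase even if the crate name is not.
    let name = name.to_ascii_lowercase();
    Some(match name.len() {
        1 => format!("1/{}", name),
        2 => format!("2/{}", name),
        3 => format!("3/{}/{}", &name[..1], name),
        _ => format!("{}/{}/{}", &name[..2], &name[2..4], name),
    })
}

fn main() {
    for name in ["a", "ab", "syn", "serde", "Rand"].iter() {
        println!("{:?} -> {:?}", name, index_path(name));
    }
}
```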

Inside the Index Files

- Inside of the index files is one line per version, which is a JSON object that looks similar to what cargo sends to publish.

- One field that’s here but not in publish is checksum, which is a hash of the .crate file.

- Going from just published JSON to this isn’t possible without also having the .crate file.

Transitioning to HTTP-based Sparse Registries

This section discusses transitioning to HTTP-based sparse registries and how they differ from git registries.

HTTP-Based Sparse Registries

- With HTTP-based sparse registries, there’s no more updating indexes or resolving deltas.

- Cargo is transitioning to using HTTP-based sparse registries.

Understanding the Cargo Registry

This section explains how the Cargo registry works and its role in hosting a list of versions and crate files.

The Role of Indexes in Hosting Versions and Crate Files

- The indexes are responsible for hosting a list of versions and `.crate` files that have checksums matching the entries.

- When cargo talks to a registry, it mainly constructs a list of available versions by parsing each line of the file, then runs the resolver to figure out which version among these should be chosen based on the dependency declaration in your Cargo.toml.

- Having dependencies listed in the index allows for faster full resolve of dependencies without downloading or extracting any crate files.

Crates Index

This section discusses the crates-index crate, which is similar to the crates-io crate but has more features than just data definitions.

Understanding Crates as an Abstract Concept

- A crate is an abstract concept that maps directly to one of the files in the index and has no information except for a list of versions.

- Every version has fields such as name, version, dependencies, checksum, features, links, yanked status and download URL.

Special Implementations Used by Cargo

- Cargo uses special string types, such as interned strings or small strings, to avoid overhead when parsing fields for every dependency version.

- To be used by cargo, our library would have to be generic over string type so that cargo can choose its own optimized string types rather than being forced to use strings.
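One way to read "generic over string type" is sketched below. The struct and field names are simplified assumptions rather than the real index schema, and `Rc<str>` merely stands in for whatever optimized string type a consumer such as Cargo might choose.

```rust
use std::rc::Rc;

#[derive(Debug, Clone, PartialEq)]
struct IndexDependency<S> {
    name: S,
    req: S,
}

// S is the string type chosen by the consumer of the library.
#[derive(Debug, Clone, PartialEq)]
struct IndexVersion<S> {
    name: S,
    vers: S,
    deps: Vec<IndexDependency<S>>,
    yanked: bool,
}

impl<S> IndexVersion<S> {
    // Converts every string field with a caller-supplied function,
    // for example an interner.
    fn map_strings<T>(self, mut f: impl FnMut(S) -> T) -> IndexVersion<T> {
        IndexVersion {
            name: f(self.name),
            vers: f(self.vers),
            deps: self
                .deps
                .into_iter()
                .map(|d| IndexDependency { name: f(d.name), req: f(d.req) })
                .collect(),
            yanked: self.yanked,
        }
    }
}

fn main() {
    let v = IndexVersion {
        name: String::from("zip"),
        vers: String::from("0.6.4"),
        deps: vec![IndexDependency {
            name: String::from("flate2"),
            req: String::from("^1.0"),
        }],
        yanked: false,
    };
    let shared: IndexVersion<Rc<str>> = v.map_strings(|s: String| Rc::<str>::from(s));
    println!("{:?}", shared);
}
```

A simple consumer can instantiate the types with `String`, while a performance-sensitive one picks its own type without the library having to know about it.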

Digging into Code on Cargo Side and Crates.io Side

This section explores the code on the cargo side and crates.io side to see where this stuff lives and to explore the code a little bit.

Understanding the Life Cycle from Publish to Consume

- The life cycle of a crate involves publishing it, talking to the registry, resolving dependencies, downloading relevant crate files and building.

- Cargo sees that you have a dependency on zip. It looks at the index and picks one version of zip. Then it looks at its dependencies and keeps doing that until it’s resolved your entire dependency tree before fetching all crate files.

Introduction

In this section, the speaker discusses the suitability of JSON for streaming dependency resolution.

JSON and Streaming Dependency Resolution

- JSON is not very suited for streaming dependency resolution because you have to parse the whole JSON object before even knowing the dependencies list.

- However, in practice, it doesn’t really matter because the entries in the registry are all very short.

- Realistically, only your direct dependencies are listed here, and there aren’t really projects that have thousands of direct dependencies.

- Having a format that’s relatively easy to work with is probably worthwhile here.

Publishing Crate Files

In this section, the speaker explains how crate files are published and how information about their dependencies is included.

Including Dependencies Information

- The information about a crate’s dependencies is included in its Cargo.toml file.

- When crates.io receives a crate file, the accompanying JSON says, for example, that there’s a dependency on rand 0.8.

- Cargo gets that information from your Cargo.toml, so it’s redundant: Cargo derives one from the other.

Hosting Index on GitHub

In this section, the speaker explains why they decided to host an index on GitHub and why they developed sparse registries.

Git vs HTTP-based API

- The reason why they hosted an index on GitHub was mostly because it’s straightforward.

- Checking out the index locally is just a git clone and you can get efficient delta updates by doing a git pull.

- However, as crates.io grew larger, using an HTTP-based API became more scalable. This led to developing sparse registries.

Overview of Git Index and Cargo

This section provides an overview of the Git index and how it is used in Cargo.

Canonical Path for Git Index

- The hash in the canonical path for the Git index is a hash of the URL of the crates.io index.

- If an alternate registry is used, they will end up with a different hash here.

- This is how Cargo differentiates them.

Structure of Git Repository Managed by Cargo

- The `.crate` files are held in `cache`.

- Extracted versions of every crate file are held in `src`.

- The actual index itself is held in `index`.

Logic Around Publish Command

- Definitions for all commands live in `src/bin/cargo`.

- The definitions for publish command live inside this directory.

- The `ops` module inside `src/cargo/ops` holds the actual logic behind all these commands.

- It lets you cleanly separate out things that have to do with CLI versus actual logic.

Code Structure of Cargo

This section provides an overview of the code structure of Cargo.

Source Directory

- Majority of cargo’s stuff lives here.

- Holds utility crates, including the `crates-io` crate.

Publish Command Code

- Definitions for all commands live in `src/bin/cargo`.

- Definitions for publish command live inside this directory.

- Exec function is the entry point for executing that command.

Ops Module

- Holds definitions for all these commands.

- Lets you cleanly separate out things that have to do with CLI versus actual logic.

Understanding the Cargo Publish Process

This section explains the process of publishing a crate using Cargo.

The Definition of Publish

- “Publish” is the code that executes when you run `cargo publish`, after all the registry arguments have been parsed.

- It mainly finds and parses your cargo config and workspace manifest, looks over members, and checks which registry you want to publish to.

- If it’s a dry run, it doesn’t do anything else. Otherwise, it constructs an operation to send to the crates.io registry.

Package One

- `cargo package` generates the crate file, which is a tarball.

- The result is a `.crate` file that gets uploaded to crates.io by `transmit`.

- If there are Git dependencies in your crate, they will not be permitted.

Transmitting Payload

- `transmit` sends both the generated JSON and `.crate` file as payload to crates.io.

- It computes a list of dependencies, generates `NewCrateDependency` values that describe each of them, parses out the manifest and readme files, looks at license files and ultimately constructs a `NewCrate` with all this information extracted from the manifest.

- `Registry::publish` uses curl to actually send the payload.

Waiting for Crate Availability

- When you run `cargo publish`, there’s logic on the crates.io side that has to happen before your version becomes available.

- Cargo therefore runs a loop that waits until your version is actually in the index and available for others, so that if someone tries to use it immediately after you’ve published it, they won’t get an error message.

Introduction

In this section, the speaker apologizes for a recent change to their chat block and discusses the crates.io side of things.

Chat Block Change

- The speaker recently set up a 10-minute follow chat block due to spammers.

- However, it can be sad when people want to raid.

Crates.io Side

- The speaker discusses where they received a JSON payload.

- They explain how `src/controllers/krate/publish.rs` handles PUT requests to `/crates/new`, the route used by `cargo publish` to publish a new crate.

- The speaker mentions that the request is split into parts using the length of the JSON and tarball.

- They discuss how metadata is checked and an entry is constructed in the database based on information from the JSON metadata.

- The speaker explains how S3 uploads are handled and how crates are registered in local git repo.

Definitions

In this section, the speaker talks about different definitions for similar things across various parts of the ecosystem.

Cargo Registry Index

- The speaker explains that all parts of the ecosystem have different definitions for similar things.

- They mention that current registry index has a definition of crate which is what ends up going in the index.

Custom Registries

In this section, someone asks about support for custom registries other than crates.io.

Support for Custom Registries

- The speaker says that custom registries are supported but cross-registry dependencies are not allowed.

Implementing a Cargo Registry

This section discusses the challenges of implementing a registry for Cargo, including the limitations of using git as a registry and the lack of support for authentication.

Challenges with Alternate Registries

- Implementing an alternate registry is challenging because they are forced to use git as a registry, which can be expensive and cumbersome.

- Sparse registries make it easier to implement your own registry based on existing infrastructure.

- Authentication support for private registries is limited, making it difficult to control who has access to your registry. Efforts are being made to improve this.

Alternative Registries

- There are several alternative registries available, such as Gitea, Artifactory, Meuse, Alexandrie, and others.

Naming the Library

- The library will contain types for interacting with a cargo registry but not all types in cargo. Possible names include “cargo index,” “cargo index schema,” or “registry schema index schema.”

- The appropriate name may be “Cargo index interface” since it applies to any cargo registry.

Naming the Cargo Index Interface

The speaker discusses possible names for the cargo index interface.

- Possible names include “interface for cargo indexes,” “cargo index schema,” and “cargo index types.”

- The speaker struggles with finding a suitable name and considers using a thesaurus to find synonyms.

- They eventually settle on “cargo index Transit” as it relates to the idea of transit points and all necessary transits needed to interact with a cargo index.

Adding Features to Cargo Home

The speaker explains how they add features to their local directory config instead of their system-wide config.

- They set a toolchain override to beta and add the feature to their local directory config instead of their system-wide config.

- This is because the feature is not stable yet, so adding it to the system-wide config would cause build failures in packages that don’t have the feature.

- By adding it locally, they get it only for the local one.

Defining Publish Phases

The speaker defines different phases involved in publishing crates.

Crates.io Definition

- There are two primary definitions: one in crates.io and another in models.

- Dependencies come from the models, which define `DependencyKind` and `Keyword`.

- `Keyword` is used, which was previously called `CrateKeyword`.

- chrono’s `NaiveDateTime` may be used, but this remains uncertain.

Index Definition

- The index definition depends on semver, which provides the semantic versioning type definitions.

Rust Crate Serialization

In this transcript, the speaker discusses the process of creating a foundational crate for Rust that can be used for serialization and deserialization. They discuss the different types of crates that will need to be included in this foundational crate and how they will need to be organized.

Creating a Foundational Crate

- The speaker discusses what is missing from the foundational crate.

- They mention that `EncodableCrateVersionReq` needs to be included.

- A `Serialize` implementation also needs to be included.

- Both `Serialize` and `Deserialize` are needed, since the types are both written and read.

- The speaker explains why all source definitions should be in one file.

- They discuss validation on deserialize and how it ensures that the type conforms with rules for strings.

- The speaker mentions that they will want to include validation as well.
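The idea of validating on deserialize can be sketched without any serialization library: a newtype whose only constructor checks the rules, so an invalid value cannot exist once parsing succeeds. The names `CrateName` and `NameError`, and the character rule used here, are assumptions made for illustration and are not taken from Cargo or crates.io.

```rust
/// Hypothetical newtype: a crate name that can only exist in validated form.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct CrateName(String);

/// Reasons a candidate name is rejected (illustrative, not the real rule set).
#[derive(Debug, PartialEq, Eq)]
pub enum NameError {
    Empty,
    InvalidChar(char),
}

impl CrateName {
    /// Validates `raw` and wraps it; this is the only way to build a `CrateName`.
    pub fn parse(raw: &str) -> Result<CrateName, NameError> {
        if raw.is_empty() {
            return Err(NameError::Empty);
        }
        // Assumed rule: ASCII letters, digits, '-' and '_' only.
        let is_allowed = |c: char| c.is_ascii_alphanumeric() || c == '-' || c == '_';
        match raw.chars().find(|&c| !is_allowed(c)) {
            Some(bad) => Err(NameError::InvalidChar(bad)),
            None => Ok(CrateName(raw.to_owned())),
        }
    }

    /// Read-only access to the validated name.
    pub fn as_str(&self) -> &str {
        &self.0
    }
}
```

A deserializer for such a type would call `CrateName::parse` on the decoded string and turn a `NameError` into a decoding error.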

Cargo vs Crates.IO

- The speaker notes an issue with two implementations of serialize.

- They suggest being generic over types so cargo can choose which version to use.

- There may end up being a lot of generics, which is not ideal.

- The speaker suggests avoiding encoding too much stuff in this crate so it remains foundational.
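Being generic over types might look roughly like the following sketch, in which a shared definition is parameterized over its string type so that each consumer can pick its own representation. `IndexDependency`, its fields, and `map_strings` are hypothetical names chosen for this example.

```rust
use std::rc::Rc;

/// Hypothetical index dependency entry, generic over its string type `S`.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct IndexDependency<S> {
    pub name: S,
    pub req: S,
    pub optional: bool,
}

impl<S> IndexDependency<S> {
    /// Converts every string field with `f`, leaving the other fields untouched.
    pub fn map_strings<T>(self, mut f: impl FnMut(S) -> T) -> IndexDependency<T> {
        IndexDependency {
            name: f(self.name),
            req: f(self.req),
            optional: self.optional,
        }
    }
}

/// One consumer might want plain owned strings...
pub type OwnedDependency = IndexDependency<String>;
/// ...while another might prefer cheaply clonable shared strings.
pub type SharedDependency = IndexDependency<Rc<str>>;
```

Every additional type that differs between consumers adds another parameter, which is the growth in generics the speaker is wary of.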

Index Entries

Summary New Function

In this section, the speaker discusses the `Summary::new` function and where it is called from in the codebase.

Finding Summary New

- The speaker looks for where `Summary::new` might be called from in the codebase.

- They find a long function called `process_dependencies` that seems promising.

- The speaker then finds a definition for `RegistryPackage`, which seems to be what they are looking for.

Intern String and Generic Types

- The speaker explains that Cargo uses `InternedString` to de-duplicate allocations of strings.

- They note that if we want Cargo to use these definitions, we will need to make them generic over these things.
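String interning in general can be sketched as below: the first time a string is seen it is allocated and remembered, and every later request for an equal string returns the same allocation. This `Interner` is a simplified illustration written for these notes, not Cargo's actual implementation, which may differ in its details.

```rust
use std::collections::HashSet;

/// Hypothetical interner: each distinct string is allocated once and reused.
#[derive(Default)]
pub struct Interner {
    seen: HashSet<&'static str>,
}

impl Interner {
    pub fn new() -> Self {
        Interner::default()
    }

    /// Returns a shared `&'static str` for `s`, allocating only on first sight.
    pub fn intern(&mut self, s: &str) -> &'static str {
        if let Some(existing) = self.seen.get(s) {
            return *existing;
        }
        // Deliberately leak the allocation so it lives for the rest of the program.
        let leaked: &'static str = Box::leak(s.to_owned().into_boxed_str());
        self.seen.insert(leaked);
        leaked
    }
}
```

Because an interned value is a small copyable handle, a shared definition that hard-codes `String` cannot hold it, which is why the definitions would need to be generic.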

Registry Dependency and Cow Types

- The speaker looks at the definition of `RegistryDependency`.

- They note that some fields use `Cow` types, which can save on allocations when decoding JSON.
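The allocation saving from `Cow` can be illustrated with a small standalone function: when the input needs no changes it is borrowed as-is, and only input containing escapes pays for a new `String`. The `unescape` function is a hypothetical, heavily simplified stand-in for what a JSON decoder does and handles only a few escape sequences.

```rust
use std::borrow::Cow;

/// Hypothetical, simplified unescape step: borrows the input when it contains
/// no backslash escapes and allocates a new `String` only when it must.
pub fn unescape(raw: &str) -> Cow<'_, str> {
    if !raw.contains('\\') {
        // Fast path: nothing to rewrite, so no allocation is needed.
        return Cow::Borrowed(raw);
    }
    let mut out = String::with_capacity(raw.len());
    let mut chars = raw.chars();
    while let Some(c) = chars.next() {
        if c != '\\' {
            out.push(c);
            continue;
        }
        match chars.next() {
            Some('n') => out.push('\n'),
            Some('t') => out.push('\t'),
            // Covers `\"` and `\\`; other escapes are passed through unchanged here.
            Some(other) => out.push(other),
            // A trailing lone backslash is kept as-is.
            None => out.push('\\'),
        }
    }
    Cow::Owned(out)
}
```

Most crate names and version requirements contain no escapes, so in this sketch they would take the borrowed path.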

Add Crate Job

In this section, the speaker discusses how crates.io serves its index and how it handles adding new crates.

Perform Index Add Crate

- The speaker looks for where something handles adding crates in the codebase.

- They find a job that regularly does git commits but are unable to find where it calls `perform_index_add_crate`.

Search-Based Definitions

- The speaker notes that definitions on crates.io are entirely search-based at present.

- They explain how searching works on crates.io and how it guesses which results are definitions versus usages.

Overall, this transcript covers the speaker’s exploration of the codebase for Cargo and crates.io, focusing on how `Summary::new` is called and how crates are added to the index. The speaker also discusses some implementation details such as `InternedString`, `Cow` types, and search-based definitions.

Dependency Ordering and Cargo Manifests

In this section, the speaker discusses dependency ordering and cargo manifests. They explore the implementation of ordering for dependencies and look at a definition of dependency kind.

Dependency Ordering

- The speaker notes that there is an implemented ordering for dependencies.

- They wonder why this is implemented.
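The transcript does not say why the ordering exists. One plausible reason, stated here purely as an assumption, is that a total order lets dependency lists be sorted so that the generated output is deterministic. A minimal sketch with hypothetical types:

```rust
/// Hypothetical dependency kind; the derived order follows declaration order.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
pub enum DependencyKind {
    Normal,
    Build,
    Dev,
}

/// Hypothetical dependency; the derived order compares `name` first, then `kind`.
#[derive(Debug, Clone, PartialEq, Eq, PartialOrd, Ord)]
pub struct Dependency {
    pub name: String,
    pub kind: DependencyKind,
}

/// Returns the dependencies in a stable, reproducible order.
pub fn sorted(mut deps: Vec<Dependency>) -> Vec<Dependency> {
    deps.sort();
    deps
}
```

With derived `Ord`, two entries for the same crate are ordered by kind, so the same input set always yields the same sequence.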

Definition of Dependency Kind

- The speaker notes that they will grab two definitions of `DependencyKind`.

- They note that the second definition of `DependencyKind` is not used anywhere else in the crates.io codebase.

- They mention that they have obtained the necessary definition.

Cargo Manifest Format

- The speaker considers taking a dependency on cargo to extract the .crate file and parse its contents to generate the publish JSON but decides against it due to circular dependencies.

- Using cargo for this purpose would mean that cargo cannot take a dependency on them.

- The simplified version of the cargo manifest format is what they need, which appears in the generated Cargo.toml file.

- They search for where this simplified manifest is defined.

TomlManifest Type

- The speaker finds out that the `TomlManifest` type is used to deserialize Cargo.toml files, meaning there isn’t a separate type for just the simplified manifest.

Simplifying the Toml Manifest

In this section, the speaker discusses simplifying the Toml manifest by removing unnecessary fields.

Removing Unnecessary Fields

- The definition of the simplified manifest should only include bits that are actually generated.

- Some fields like `project`, `dev_dependencies2`, `build_dependencies2`, `patch`, `workspace`, and `badges` can be removed, as they are always set to None or are deprecated.

- The workspace dependency variant can be filtered out since it is never used in the TOML dependencies.

- The `map_deps` function removes anything that is a workspace dependency.

Verifying Tarball

In this section, the speaker discusses verifying the tarball and checking if dependencies are available in the registry.

Checking Dependencies

- The `verify_tarball` function checks if all dependencies are available in the registry.

- Crate metadata seems to be separate from Cargo.toml.

Cargo.toml Manifest Definition

In this section, the speaker discusses the definition of a Cargo.toml manifest and its various components.

Components of a Cargo.toml Manifest

- The `MaybeWorkspaceField` wrapper can be replaced with its inner type, since the value is either defined or not.

- The speaker expresses enthusiasm for using `StringOrBool` and `StringOrVec`.

- The toml crate is needed and can be added to cargo-index-transit via cargo add toml.

- `dev_dependencies2` and `build_dependencies2` do not get set.

- `replace` does not get set, and neither do `patch` and `workspace`.

- `DetailedTomlDependency` must be brought over as well.

- There are no simple dependencies; only detailed dependencies exist.

- The speaker notes that foreign strings are present in the manifest definition.

- The type parameter `P` is never used and can be removed.

- A sub-module called `deser` is created to bring in the serde helpers.

Mapping Dependencies

In this section, the speaker discusses how dependencies are mapped in a Cargo.toml manifest.

Mapping Dependencies

- All detailed TOML dependencies go through `map_deps`, which calls `map_dependency`.

- `map_dependency` takes anything that is detailed and removes `path`, `git`, `branch`, `tag`, and `rev`. It changes the registry index but leaves everything else alone.

- Simple dependencies are turned into detailed dependencies.
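The mapping described above can be sketched as follows. The types are simplified stand-ins for Cargo's manifest types, and passing the new registry index as a parameter is an assumption made for this example, since the transcript does not say how the index value is computed.

```rust
/// Hypothetical, trimmed-down detailed dependency.
#[derive(Debug, Clone, Default, PartialEq)]
pub struct DetailedDependency {
    pub version: Option<String>,
    pub registry_index: Option<String>,
    pub path: Option<String>,
    pub git: Option<String>,
    pub branch: Option<String>,
    pub tag: Option<String>,
    pub rev: Option<String>,
    pub features: Vec<String>,
    pub optional: bool,
}

/// A dependency as written in a manifest: a bare version or a detailed table.
pub enum Dependency {
    Simple(String),
    Detailed(DetailedDependency),
}

/// Normalizes a dependency for publishing: simple becomes detailed, source
/// fields are cleared, and the registry index is replaced by `new_index`
/// (how that value is derived is assumed, not taken from Cargo).
pub fn map_dependency(dep: Dependency, new_index: Option<String>) -> DetailedDependency {
    match dep {
        Dependency::Simple(version) => DetailedDependency {
            version: Some(version),
            ..Default::default()
        },
        Dependency::Detailed(d) => DetailedDependency {
            path: None,
            git: None,
            branch: None,
            tag: None,
            rev: None,
            registry_index: new_index,
            // Everything else (version, features, optional) is left alone.
            ..d
        },
    }
}
```

After this step only one shape of dependency remains, which is why the simplified manifest has no need for a simple variant.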

Cleaning up the Code

In this section, the speaker is cleaning up the code and removing unnecessary types that won’t be used in the final upload.

Removing Unnecessary Types

- The speaker removes unnecessary types such as `TomlProfiles` and `TomlTarget`.

- The speaker examines `PathValue` and decides to keep it since it holds a `PathBuf` but is serialized and deserialized as a string.

- The speaker considers pruning out all things that don’t go in the upload, including all of the targets and profiles.
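A type that holds a path but travels as a string can be sketched with the standard `FromStr` and `Display` traits. This `PathValue` is a simplified illustration for these notes; the real type does the equivalent through its serialization implementations.

```rust
use std::convert::Infallible;
use std::fmt;
use std::path::PathBuf;
use std::str::FromStr;

/// Hypothetical wrapper: a path in memory, a plain string on the wire.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct PathValue(pub PathBuf);

impl FromStr for PathValue {
    // Any string is accepted as a path, so parsing cannot fail.
    type Err = Infallible;

    fn from_str(s: &str) -> Result<Self, Self::Err> {
        Ok(PathValue(PathBuf::from(s)))
    }
}

impl fmt::Display for PathValue {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        // `display()` is lossy for non-UTF-8 paths; acceptable for a sketch.
        write!(f, "{}", self.0.display())
    }
}
```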

Pruning Down Definitions

- The speaker prunes down definitions by removing all things that don’t go in the upload, including all of the targets and profiles.

- The speaker keeps `TomlPackage` because it defines the actual cargo package, including fields like name and version.

Understanding the Cargo Manifest

In this section, the speaker discusses various fields in the Cargo manifest and their relevance.

Fields in the Manifest

- The `build` field is not currently relevant and can be ignored.

- The `metabuild` field is not present in the manifest.

- The seal references field is not discussed further.

- The `package` field contains information about the package being built.

- The `exclude`, `include`, and `workspace` fields are not relevant to the registry or resolver.

- The `metadata` field is not published and does not need to be parsed.

- The `artifact` and `lib` fields are also not important to the resolver or registry.

Public/Private Dependencies

- The speaker discusses an RFC proposing a way to mark dependencies as private so they do not leak. This would help with backwards compatibility issues.

Parsing TOML Manifests

In this section, the speaker discusses parsing TOML manifests and removing unnecessary fields.

Removing Unnecessary Fields

- The speaker suggests that some fields can be removed since they are not relevant to the registry.

- The `exclude` and `include` fields are only used by cargo package, so they can be removed once cargo package has run.

- `default-run` is also not relevant to the registry and can be removed.

- Metadata is still relevant, so it should be kept for now.

Parsing TOML Manifests

- The speaker mentions that only `StringOrBool` is needed for readme.

- `VecStringOrBool` is not needed anymore.

- `version_trim_whitespace` is still in use.

Understanding the Version Encoding

This section discusses the different instances of version encoding and how they are used in crates.io and cargo.

Version Encoding

- There are multiple instances of version encoding that need to be dealt with.

- The crates-index crate has its own version encoding, which is optimized for compactness since it is used to process crates in batch.

- Crates.io uses a different version encoding than the crates-index crate, while Cargo does not use any specific version encoding.

- The dependency kind also has its own custom implementation of decoding and validation.
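Custom decoding with validation for a dependency kind might look like the sketch below, which accepts only the three kind strings used in registry index entries and rejects anything else. The type and error names are hypothetical and are not copied from Cargo, crates.io, or crates-index.

```rust
use std::str::FromStr;

/// Hypothetical dependency kind as it appears in an index entry.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum DependencyKind {
    Normal,
    Build,
    Dev,
}

/// Error carrying the string that failed validation.
#[derive(Debug, PartialEq, Eq)]
pub struct UnknownKind(pub String);

impl FromStr for DependencyKind {
    type Err = UnknownKind;

    /// Decodes a kind string, rejecting anything outside the known set.
    fn from_str(s: &str) -> Result<Self, Self::Err> {
        match s {
            "normal" => Ok(DependencyKind::Normal),
            "build" => Ok(DependencyKind::Build),
            "dev" => Ok(DependencyKind::Dev),
            other => Err(UnknownKind(other.to_owned())),
        }
    }
}

impl DependencyKind {
    /// Encodes the kind back to its string form.
    pub fn as_str(self) -> &'static str {
        match self {
            DependencyKind::Normal => "normal",
            DependencyKind::Build => "build",
            DependencyKind::Dev => "dev",
        }
    }
}
```

Keeping decoding and encoding next to each other makes it easy to check that the two directions round-trip.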

Parsing Metadata

This section discusses parsing metadata using the cargo metadata command and the cargo_metadata crate.

Cargo Metadata